Using AI to Moderate High-Risk In-App Communities Without Compromising Privacy

In high-risk sectors such as fintech, trading, sports, and live competitive gaming, adding a social feature is a double-edged sword for product managers.

On one hand, engagement numbers show that users who interact with social features churn at a much lower rate. On the other hand, there is the nightmare scenario: a platform that becomes a breeding ground for scams, harassment, or “pump and dump” schemes.

The traditional answer to this problem was manual moderation. However, manual moderation becomes infeasible when 50,000 users react to a goal or a market crash at the same time. The usual alternative, simple keyword filtering (“bad word lists”), is easily circumvented by bad actors and frustrates legitimate users in the process.

The new paradigm demands a system that understands context, responds within milliseconds, and, most importantly, safeguards user privacy throughout. For many high-risk platforms, this means moving from manual review and simple keyword lists to AI chat moderation systems that can make decisions in real time. Here is how advanced AI addresses the safety paradox on high-risk platforms.

The Problem of Keyword Filters in High-Context Situations

In a high-risk community, the difference between a safe message and a dangerous one is often context, not vocabulary.

- False Positives. In an online game, talking about a “kill” is normal and has nothing to do with real violence. A keyword filter cannot tell the difference, so it flags harmless game talk along with genuine threats.

- Scam Problem. Scammers often avoid obvious profanity. They use polite speech to lure users off-platform. Simple filters miss this altogether, while contextual AI models understand the intention and pattern of a scam.

- Speed. Harm in real-time situations like sports or finance happens in seconds. If a harmful message stays visible while a human checks it, the experience is already broken.
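The first two failure modes are easy to reproduce. The sketch below is a minimal illustration, not any product's actual filter: the two-word `BLOCKLIST`, the helper `keyword_filter`, and both sample messages are assumptions made up for this example.

```python
import re

# Hypothetical blocklist for illustration; real lists are far longer.
BLOCKLIST = {"kill", "scam"}


def keyword_filter(message: str) -> bool:
    """Return True if the message contains any blocklisted word."""
    words = re.findall(r"[a-z']+", message.lower())
    return any(word in BLOCKLIST for word in words)


gaming_banter = "Nice kill in that last round!"
polite_scam = "Hello! I can help you double your deposit. Please message me on Telegram."

print(keyword_filter(gaming_banter))  # True: harmless game talk is blocked
print(keyword_filter(polite_scam))    # False: the polite lure passes
```

The filter blocks the harmless message and lets the lure through because it only looks at vocabulary. Closing that gap requires a model that scores intent and conversation pattern, which a word list cannot express.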

Protecting PII: Masking at Source

One of the major compliance risks in fintech and social apps is the unintended disclosure of Personally Identifiable Information (PII). Users may share a phone number or wallet address without realizing the security implications.

Advanced moderation systems place a “Masking Layer” just before the public stream:

- Automatic Detection. The layer detects patterns that correspond to credit card numbers, phone numbers, or email addresses.

- Instant Redaction. The information is redacted instantly with asterisks replacing the numbers so that this information is never revealed to the public.

- Privacy Preservation. Users can express themselves freely without exposing the service to a GDPR breach or data security violation caused by what they post.
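The detect-and-redact steps above can be sketched with regular expressions. This is a simplified illustration under stated assumptions: the three patterns in `PII_PATTERNS` and the helper `mask_pii` are hypothetical, deliberately loose, and not the rules of any specific product. Production detectors are usually stricter (for example, validating card numbers with a Luhn check) and cover more data types, such as wallet addresses.

```python
import re

# Illustrative patterns only; each is an assumption, not a vetted detector.
PII_PATTERNS = [
    re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),   # email addresses
    re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),       # card-like runs of 13-16 digits
    re.compile(r"\+?\d[\d\s().-]{7,}\d"),          # phone-like numbers
]


def mask_pii(message: str) -> str:
    """Replace every detected PII span with asterisks of the same length."""
    for pattern in PII_PATTERNS:
        message = pattern.sub(lambda m: "*" * len(m.group()), message)
    return message


print(mask_pii("Call me at +1 415-555-0132 or mail jane.doe@example.com"))
print(mask_pii("My card is 4111 1111 1111 1111"))
```

Running the masking step before the message is stored or broadcast is what keeps the raw value out of the public stream. Keeping the asterisk run the same length as the original is a design choice in this sketch; a fixed-length placeholder would leak less about what was removed.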

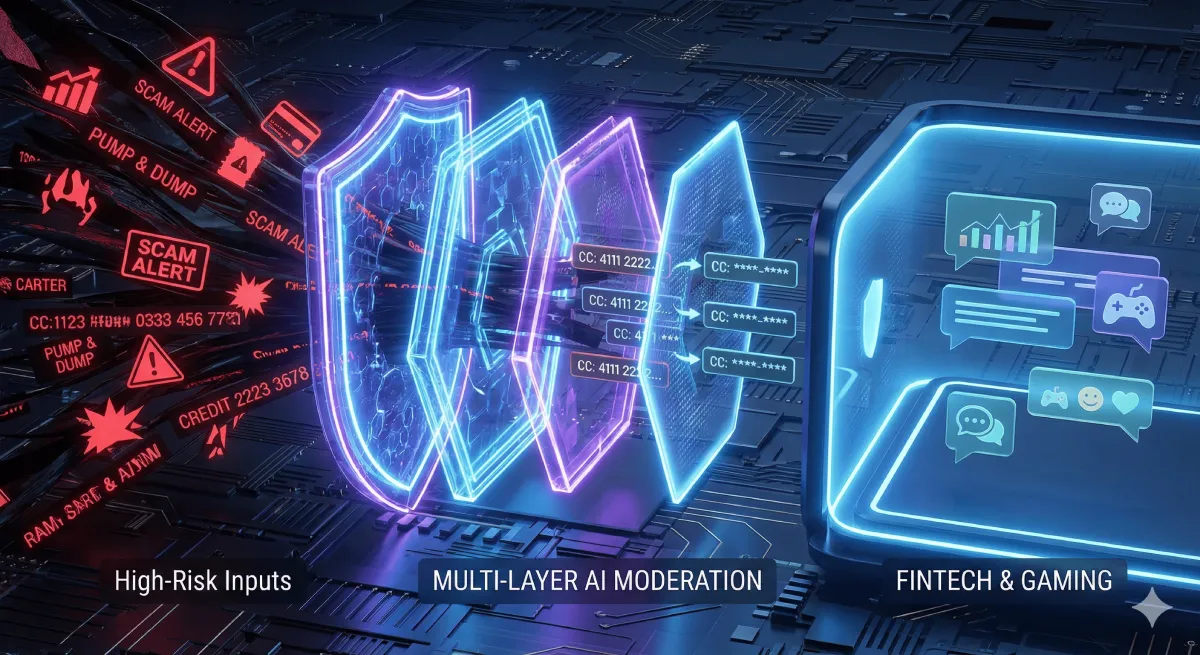

The “Defense in Depth” Architecture

Safety in high-risk applications cannot rely on a single algorithm. At watchers.io, we use a five-layer moderation system to provide redundancy and improve accuracy:

- Pre-Moderation (The Hard Filter). A first layer that blocks obviously derogatory or otherwise clearly unacceptable words before further processing. It also signals to users that rules exist.

- Masking (Privacy Layer). Automatically hides personal and financial data to protect against fraud and doxxing. PII is hidden at the moment a message is sent and remains invisible even to admins.

- The Core. AI models arranged in a cascade assess the sentiment and context of text and images, with analysis taking milliseconds. This layer distinguishes between heated arguments (common in sports and trading) and threats of violence.

- User Self-Regulation. Users can hide content they do not like and report bad actors. If several people flag an account, the system can automatically limit that user’s ability to post. Users can also hide individual participants, which is especially relevant for sports and video games.

- Manual Review. The final escalation level for appeals or borderline situations.
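To make the flow between the five layers concrete, here is a simplified sketch. It is not the watchers.io implementation: every name (`Pipeline`, `Verdict`, `toy_risk_model`), the blocklist entry, the flag limit, and the score cut-offs are assumptions chosen for illustration, and the AI cascade is replaced by a stub function that returns a risk score between 0 and 1.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, Dict, Set

# All names and thresholds below are hypothetical, chosen for illustration.
HARD_BLOCKLIST = {"badword"}      # placeholder for clearly unacceptable terms
FLAG_LIMIT = 3                    # distinct reporters before posting is limited
REVIEW_AT, BLOCK_AT = 0.4, 0.8    # risk-score cut-offs for review and block


@dataclass
class Verdict:
    action: str   # "publish", "block", or "review"
    text: str     # the text as it would be shown or queued
    layer: str    # the layer that made the decision


@dataclass
class Pipeline:
    risk_model: Callable[[str], float]   # stand-in for the AI model cascade
    flags: Dict[str, Set[str]] = field(default_factory=dict)

    def flag(self, reporter: str, target: str) -> None:
        """Record that `reporter` flagged `target` (self-regulation input)."""
        self.flags.setdefault(target, set()).add(reporter)

    def moderate(self, user: str, text: str) -> Verdict:
        # Self-regulation: checked first in this sketch because it limits
        # the sender rather than judging an individual message.
        if len(self.flags.get(user, set())) >= FLAG_LIMIT:
            return Verdict("block", "", "self-regulation")

        # Pre-moderation: hard filter on clearly unacceptable words.
        words = re.findall(r"\w+", text.lower())
        if any(word in HARD_BLOCKLIST for word in words):
            return Verdict("block", "", "pre-moderation")

        # Masking: crude stand-in that hides runs of 7 or more digits.
        masked = re.sub(r"\d{7,}", lambda m: "*" * len(m.group()), text)

        # Core: contextual risk score in [0, 1] from the (stubbed) models.
        score = self.risk_model(masked)
        if score >= BLOCK_AT:
            return Verdict("block", "", "core")
        if score >= REVIEW_AT:
            # Manual review: borderline cases go to a human queue.
            return Verdict("review", masked, "manual-review")
        return Verdict("publish", masked, "core")


def toy_risk_model(text: str) -> float:
    """Placeholder scorer; a real system would call trained models here."""
    lowered = text.lower()
    if "i will find you" in lowered:
        return 0.95
    if "seed phrase" in lowered:
        return 0.6
    return 0.1


pipeline = Pipeline(risk_model=toy_risk_model)
print(pipeline.moderate("ann", "That ref is blind, worst call ever!"))
print(pipeline.moderate("bob", "Text me on 5551234567"))
```

The point of the layering is that cheap, deterministic checks run before the expensive model, masking happens before any text leaves the pipeline, and only the borderline band of scores reaches human reviewers. The stub scorer is phrase-based purely to keep the example runnable; it stands in for the contextual models and should not be read as a recommendation.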

Conclusion: Safety is a Retention Metric

Historically, moderation was viewed as a cost center, something you had to pay for to prevent problems. For high-risk apps, however, safety is now a product feature. If your community makes users feel safe from scams and abuse, they will stick around. If it inundates them with spam, they will leave.

By incorporating a moderation system that utilizes AI and a privacy-first approach, you can transform your community from a potential risk into a safe and thriving asset that allows your users to share knowledge and experiences without constantly worrying about scams and abuse.
