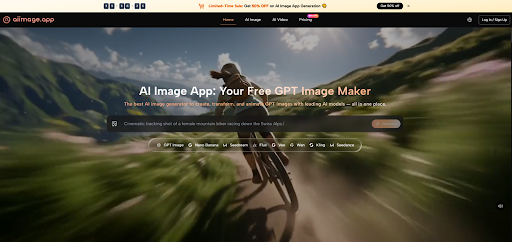

Why I Chose an AI Image Platform Based on Consistency, Not Just One Stunning Image

I remember the exact moment I almost made the wrong decision. I had test results from six AI image platforms open in separate tabs, and one of them had just generated a cinematic portrait that stopped me mid-sentence. The lighting, the skin texture, the depth of field, everything looked like a frame from a high-budget film. For about ten minutes, I was convinced that I had found my tool. Then I tried to generate twenty more images with coordinated prompts for a visual storyboard, and the magic unraveled. That experience forced me to rethink what I was actually choosing. I wasn’t picking a single-image vending machine. I was picking a workspace I would return to several times a week, and that realization led me to spend more time with AI Image Maker than I initially planned. The decision was less about peak performance and more about what I could trust at 11 PM on a Tuesday.

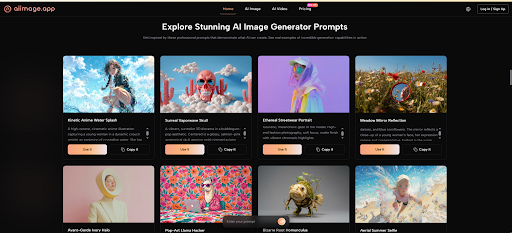

The Five Dimensions That Matter More Than a Hero Shot

Most online comparisons zoom in on image quality as if it were the only axis that counted. I understand why. A side-by-side image gallery is easy to scan, and the platform with the most visually arresting example looks like the winner. But when you are choosing a tool for ongoing work, four other dimensions start to carry equal weight: how fast the tool responds when you are in a flow state, whether the interface protects you from ad-like noise, how actively the platform updates its models without disrupting your saved workflows, and whether the visual layout helps you focus or subtly scatters your attention. I gave each dimension a weight and scored six platforms on a scale from 0 to 10. The table below is the result of that multi-factor assessment, and I tried to score honestly even when my favorite platform didn’t lead a category.

| Platform | Image Quality | Loading Speed | Ad Distraction | Update Activity | Interface Cleanliness | Weighted Overall |
| --- | --- | --- | --- | --- | --- | --- |
| AIImage.app | 8.5 | 9.0 | 9.5 | 8.5 | 9.0 | 8.9 |
| Midjourney | 9.5 | 7.5 | 8.5 | 9.0 | 7.0 | 8.3 |
| Leonardo AI | 8.5 | 8.0 | 7.0 | 8.5 | 7.5 | 7.9 |
| Adobe Firefly | 8.0 | 8.0 | 8.5 | 8.0 | 8.0 | 8.1 |
| Krea | 8.0 | 7.5 | 7.5 | 8.0 | 7.5 | 7.7 |
| Freepik AI | 7.5 | 8.0 | 6.0 | 7.5 | 7.0 | 7.2 |

Midjourney still wins on pure image quality for many photographic and artistic prompts, and anyone who denies that is ignoring a lot of visual evidence. However, its interface, which relies on a chat platform, consistently pulled my attention into channels and threads that had nothing to do with my current project. That design choice is not a flaw for everyone, but for me it added a layer of ambient distraction that I could not justify during focused work. Leonardo AI offered impressive model diversity, and I almost chose it as my primary tool. The reason I didn’t came down to a subtle feeling of visual busyness in its dashboard that I never fully got used to. Adobe Firefly felt safe and legally clearer for commercial work, but its update cadence felt tied to a much larger suite, and I sometimes worried that standalone image generation improvements would arrive more slowly than on nimbler platforms. Krea’s real-time canvas was genuinely fun for exploration, though its server responsiveness during peak hours occasionally dipped. Freepik AI provided a decent integrated resource library, but the promotional nudges for other Freepik products made it feel less like a dedicated image workspace.
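One way to read the table for weak spots rather than peaks is to look at each platform's lowest score and the gap between its best and worst columns. The snippet below is a minimal sketch of that reading, not part of the original evaluation. The scores are copied from the table; the helper names `weakest_dimension` and `spread` are hypothetical, chosen for illustration.

```python
# Dimension names in the same order as the table columns.
DIMENSIONS = [
    "Image Quality",
    "Loading Speed",
    "Ad Distraction",
    "Update Activity",
    "Interface Cleanliness",
]

# Scores copied from the comparison table (0 to 10, higher is better).
SCORES = {
    "AIImage.app": [8.5, 9.0, 9.5, 8.5, 9.0],
    "Midjourney": [9.5, 7.5, 8.5, 9.0, 7.0],
    "Leonardo AI": [8.5, 8.0, 7.0, 8.5, 7.5],
    "Adobe Firefly": [8.0, 8.0, 8.5, 8.0, 8.0],
    "Krea": [8.0, 7.5, 7.5, 8.0, 7.5],
    "Freepik AI": [7.5, 8.0, 6.0, 7.5, 7.0],
}


def weakest_dimension(scores):
    """Return (dimension name, score) for the lowest-scoring column.

    Ties resolve to the leftmost column, because min() keeps the first match.
    """
    index = min(range(len(scores)), key=lambda i: scores[i])
    return DIMENSIONS[index], scores[index]


def spread(scores):
    """Gap between a platform's best and worst column."""
    return max(scores) - min(scores)


for name, scores in SCORES.items():
    dimension, low = weakest_dimension(scores)
    print(f"{name:14s} weakest: {dimension} ({low}), spread: {spread(scores):.1f}")
```

Reading the floor alongside the spread keeps a single standout column from hiding a weak one. A platform can post the top score in one dimension and still have the lowest floor among the leaders.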

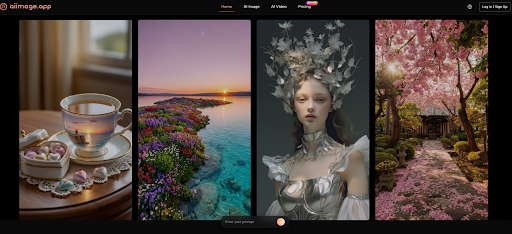

What Consistency Looked Like Across a Full Testing Week

When I dug deeper into AIImage.app, I was not looking for a tool that would wow me on the first prompt. I wanted something that would produce dependable results across thirty prompts and let me compare outputs without leaving the generation view. The platform places GPT Image 2 in a prominent position as a model designed for structured, high-detail generation, and I leaned on it for scenes that demanded spatial accuracy. A test batch of isometric room designs came back with noticeably fewer perspective drift issues than similar batches I ran through two other tools. That doesn’t mean every image was gallery-ready. Some compositions felt a little too safe, lacking the unexpected texture choices that sometimes appear on platforms with looser model behaviors. But the safe bet won out in my decision because I needed a foundation I could build on, not a surprise that would take an hour to correct.

Breaking Down the Scoring Logic in More Detail

I want to explain the scoring philosophy behind the table so it doesn’t look like numbers pulled from a hat. On every dimension, a higher score is better, so a high Ad Distraction score means fewer interruptions, not more. Image Quality was judged on structural accuracy, lighting coherence, and how well the output matched the intent of the prompt across multiple categories. Loading Speed reflected not just model inference but the entire round-trip experience from prompt submission to a viewable result. Ad Distraction measured how often promotional elements, upsells, or community feeds interrupted the core workflow. Update Activity captured how frequently I noticed meaningful model improvements or new model availability without requiring a complete workflow reset. Interface Cleanliness assessed visual noise, logical button placement, and the number of clicks needed to reach the generation panel.
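The Weighted Overall column can be sketched as a weighted average of the five dimension scores. The article does not publish the actual weights, so the equal weights below are an assumption, and `weighted_overall` is a hypothetical helper name. With equal weights, the sketch reproduces the published column to one decimal place.

```python
# Scores copied from the comparison table, in column order:
# image quality, loading speed, ad distraction, update activity,
# interface cleanliness.
SCORES = {
    "AIImage.app": [8.5, 9.0, 9.5, 8.5, 9.0],
    "Midjourney": [9.5, 7.5, 8.5, 9.0, 7.0],
    "Leonardo AI": [8.5, 8.0, 7.0, 8.5, 7.5],
    "Adobe Firefly": [8.0, 8.0, 8.5, 8.0, 8.0],
    "Krea": [8.0, 7.5, 7.5, 8.0, 7.5],
    "Freepik AI": [7.5, 8.0, 6.0, 7.5, 7.0],
}

# ASSUMPTION: equal weights. The real weights are not stated in the article.
WEIGHTS = [0.2, 0.2, 0.2, 0.2, 0.2]


def weighted_overall(scores, weights):
    """Weighted average of the scores, rounded to one decimal place."""
    if len(scores) != len(weights):
        raise ValueError("scores and weights must have the same length")
    total = sum(score * weight for score, weight in zip(scores, weights))
    # Dividing by the weight sum keeps the result on the 0 to 10 scale
    # even if the weights are changed and no longer add up to 1.
    return round(total / sum(weights), 1)


overall = {name: weighted_overall(s, WEIGHTS) for name, s in SCORES.items()}

# Print platforms from highest to lowest overall score.
for name, value in sorted(overall.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:14s} {value}")
```

Changing `WEIGHTS` is the quickest way to adapt the comparison to your own priorities. For example, raising the image-quality weight shows how much a platform's lead depends on the weighting rather than on the raw scores.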

Why Ad Distraction Became My Unexpected Dealbreaker

I didn’t expect Ad Distraction to dominate my evaluation this much. Before this round of testing, I assumed I could tolerate a few banners in exchange for higher image quality. But in practice, even small interruptions compound when you are iterating rapidly. A full-screen upgrade prompt that blocks the generation view once per session might cost only five seconds, but it also resets your short-term working memory of what you were about to type. By the end of a long session on a cluttered platform, I noticed I was making more prompt errors and accepting mediocre outputs just to finish. The near absence of such interruptions on AIImage.app didn’t make the images prettier, but it kept my own thinking clearer, and that improved the work more than a sharper render ever could.

The Workflow Steps I Found Myself Repeating

The platform’s creation structure is straightforward, and I preferred it because it didn’t introduce extra decision points that my tired brain had to parse.

- Choose the image generation or image editing creation path on the homepage.

- Enter a prompt, and optionally upload a reference image for style or composition guidance.

- Select a model that fits the visual goal of the current task.

- Generate, examine the results, download the usable ones, and refine the prompt for any that missed the mark.

This loop took me from an idea to a shortlist in under a minute for simpler prompts, and a few minutes for more complex multi-object scenes. That speed wasn’t miraculous: it was the result of an interface that didn’t demand extra navigation between steps.

Acknowledging the Trade-Offs I Made

I need to be clear about what I sacrificed by prioritizing consistency over peak artistry. There were moments when I saw a Midjourney output from a colleague and felt a small pang of visual jealousy. The texture and mood that platform can pull out of a single prompt remain genuinely impressive. I also occasionally missed Leonardo AI’s fine-tuning depth when I wanted to nudge a model in a very specific stylistic direction. AIImage.app’s model roster is capable and varied, but some of the more experimental or highly stylized model behaviors available elsewhere are not its core strength. Additionally, while I tested the video-related creation entries, they did not form a major part of my evaluation because my workload was image-heavy. Someone whose primary output is short video clips would need to run their own battery of tests.

Who This Platform Makes the Most Sense For

I didn’t end up recommending this platform to everyone. Some people genuinely need the absolute highest possible image quality on a single output, and they are willing to manage the friction that comes with that priority. Others are deeply embedded in a design ecosystem where integration matters more than standalone interface cleanliness. But for the wide middle of creators, marketers, and small teams who generate images frequently and can’t afford to have their attention splintered by their tools, the math shifts. A platform that consistently finishes in the top tier across all dimensions, without a glaring weak spot, often outperforms a specialist that wins one column but loses two. In my own work, I found that AIImage.app gave me back more creative stamina at the end of a long day, and that turned out to be the deciding factor no screenshot comparison could capture. My advice, if you’re stuck between multiple options, is to run a high-volume test, not a one-shot beauty contest. You might find, as I did, that the platform you actually want to open again tomorrow is not the one that wowed you yesterday.
